Part 1: Comprehensive Exploration of the Chosen Prompt Pattern

Definition

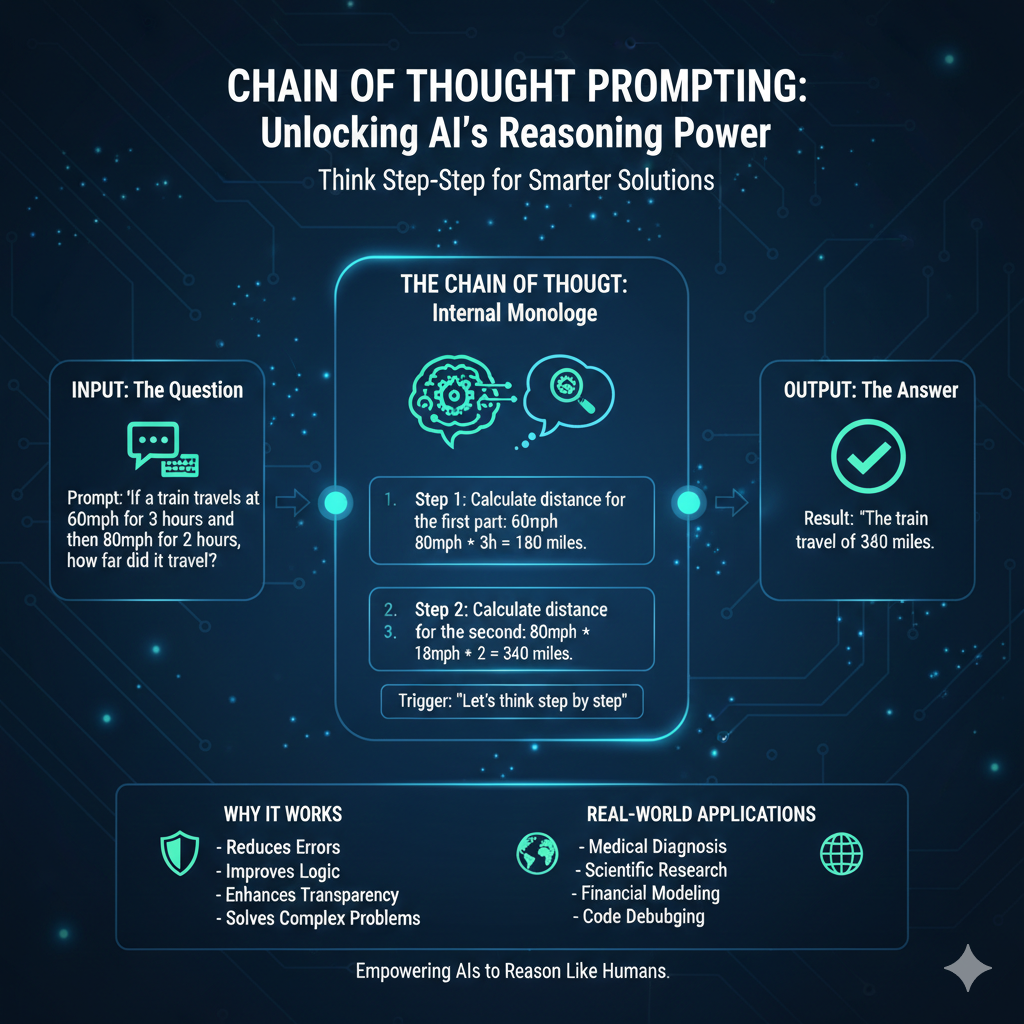

The **Chain of Thought (CoT)** prompt pattern is a technique that encourages Large Language Models (LLMs) to generate intermediate reasoning steps before arriving at a final answer. Instead of a direct "Input $\rightarrow$ Output" flow, it creates an "Input $\rightarrow$ Reasoning $\rightarrow$ Output" flow.

Core Concept 1

Intermediate Reasoning Tokens: LLMs work by predicting the next token. When the model is prompted to write out its "work," those logical steps become part of the visible text (context). The model then conditions on its own "scratchpad" when generating the final conclusion.

Core Concept 2

Logical Grounding: The goal is for the final answer to follow as a mathematical or logical consequence of the previous steps, rather than being a statistical guess based on the phrasing of the question.

Purpose & Significance

In the field of NLP, CoT is significant because it helps narrow the "calculation gap" in transformer models, which often stumble on multi-step problems when asked to answer directly.

- Impact on Performance: It can substantially reduce errors and "hallucinations" (confident but false statements) in multi-step problems like math, symbolic logic, and strategy.

- User Interaction: It builds trust. When a user can see why an AI arrived at a conclusion, they can audit the logic. It transforms the AI from a "Black Box" into a "Transparent Partner."

Significance in Real-World Applications

Example: Medical Diagnostic Support. In clinical settings, an AI might be asked to suggest a treatment plan based on a patient's history. Without CoT, the AI might suggest a drug instantly. With CoT, the AI first lists the patient's allergies, then the drug's contraindications, and finally concludes whether the drug is safe. This "step-by-step" breakdown allows doctors to spot a single logical error before a prescription is ever written.

Part 2: Demonstrating the Prompt Pattern Through Examples

Scenario Description: Sustainable City Planning

Case Study

A community organizer is trying to determine the feasibility of going 100% solar for a small housing development. This requires accurate math and the consideration of environmental variables that are often overlooked.

Prompt 1: The Standard "Direct" Prompt

Prompt: "How many 400W solar panels do I need to power a village of 50 homes that each use 30kWh per day?"

Prompt 2: The "Chain of Thought" Prompt

Prompt: "How many 400W solar panels do I need to power a village of 50 homes that each use 30kWh per day? Let's think step by step.

1. Calculate the total daily energy need for the village.
2. Assume an average of 5 peak sun hours per day to find the required system capacity.
3. Factor in a 25% efficiency loss for inverters and wiring.
4. Divide by the panel wattage to get the final count."

Response:

Step 1: The village's total daily energy need is 50 homes × 30kWh = 1,500kWh.

Step 2: We have 5 sun hours to generate this. 1,500kWh / 5 hours = 300kW required capacity.

Step 3: To account for a 25% loss, we need a larger system. 300kW / 0.75 = 400kW total system size.

Step 4: 400,000W / 400W per panel = 1,000 panels.

Final Answer: You need 1,000 solar panels.
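The arithmetic in this response can be reproduced in a few lines of Python. This is only an illustrative sketch: the function name `panels_needed` and its parameter names are assumptions introduced here, and the default values are the ones stated in the prompt (5 peak sun hours, 25% loss).

```python
import math


def panels_needed(homes, kwh_per_home_per_day, panel_watts,
                  peak_sun_hours=5.0, system_loss=0.25):
    """Estimate the panel count using the four steps from the CoT prompt.

    All names are illustrative; the defaults mirror the prompt's assumptions.
    """
    # Step 1: total daily energy need of the village, in kWh.
    daily_kwh = homes * kwh_per_home_per_day
    # Step 2: capacity needed to produce that energy within the peak sun hours, in kW.
    required_kw = daily_kwh / peak_sun_hours
    # Step 3: enlarge the system to compensate for inverter and wiring losses.
    system_kw = required_kw / (1.0 - system_loss)
    # Step 4: convert kW to W and divide by the panel rating.
    panels = system_kw * 1000 / panel_watts
    # Round away float noise, then round up because panels come in whole units.
    return math.ceil(round(panels, 6))


print(panels_needed(homes=50, kwh_per_home_per_day=30, panel_watts=400))  # 1000
```

Changing `peak_sun_hours` or `system_loss` shows how sensitive the final count is to the two assumptions the prompt makes explicit.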

Analysis of Model Responses

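Comparison: The response to Prompt 1 is unreliable for a real-world scenario because it assumes panels produce power 24 hours a day. Under that assumption the village would appear to need only 1,500kWh / 24 hours = 62.5kW, or about 157 panels, which badly understates the requirement because it ignores environmental physics. The response to Prompt 2 is accurate under its stated assumptions and provides a "paper trail" for the user.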

Strengths: Prompt 2 forces the model to respect "Peak Sun Hours," which is the most critical variable in solar planning.

Potential Improvements: The user could further improve this by asking the AI to "Check for errors in the previous step before moving to the next one," which is a technique called Self-Verification CoT.
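A prompt like Prompt 2, with or without the self-check instruction, can also be assembled programmatically. The sketch below is a minimal illustration rather than a standard API: the helper name `build_cot_prompt` and its parameters are hypothetical, while the trigger phrase, the steps, and the self-check sentence are taken from the text above.

```python
def build_cot_prompt(question, steps=(), self_verify=False):
    """Assemble a Chain of Thought prompt as plain text (hypothetical helper)."""
    # The trigger phrase asks the model to reason before answering.
    lines = [f"{question} Let's think step by step."]
    # Optional explicit steps turn the open-ended trigger into a guided chain.
    lines.extend(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    if self_verify:
        # Self-check instruction quoted from the suggested improvement.
        lines.append("Check for errors in the previous step before moving to the next one.")
    return "\n".join(lines)


prompt = build_cot_prompt(
    "How many 400W solar panels do I need to power a village of 50 homes "
    "that each use 30kWh per day?",
    steps=[
        "Calculate the total daily energy need for the village.",
        "Assume an average of 5 peak sun hours per day to find the required system capacity.",
        "Factor in a 25% efficiency loss for inverters and wiring.",
        "Divide by the panel wattage to get the final count.",
    ],
    self_verify=True,
)
print(prompt)
```

Calling the helper with no `steps` yields the bare question plus the trigger phrase, which makes it easy to compare guided and unguided variants of the same question.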

Creative Presentation

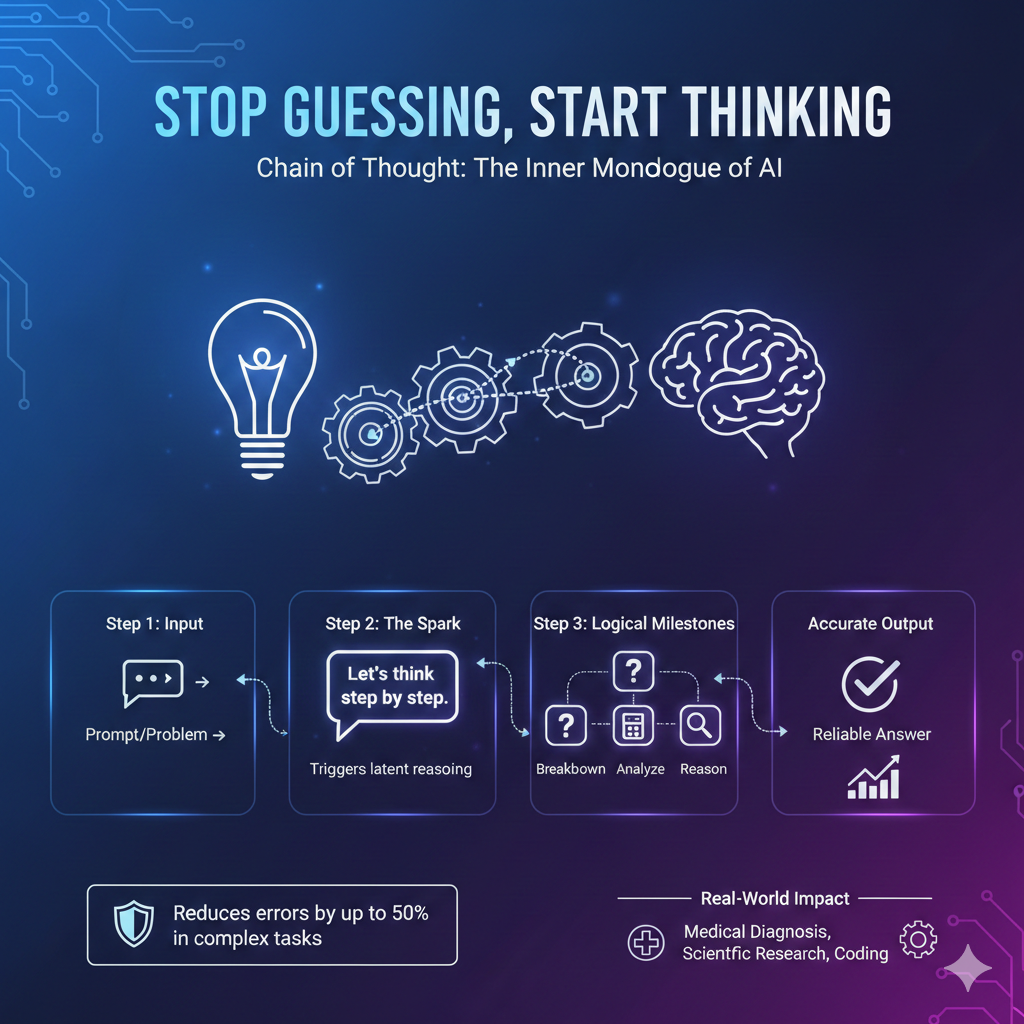

Format 1: Educational Infographic

Visual explanation of the "Inner Monologue" and the "Input -> Spark -> Milestone -> Output" flow.

Format 2: The "Detective" Story

Imagine a Detective (the User) interviewing a Witness (the AI).

- Without CoT: The Detective asks "Who is the killer?" and the Witness blurts out "The Butler!" without explaining why. The Detective cannot trust this.

- With CoT: The Detective says, "Walk me through the night. Who was in the hall? Was the door locked? Why was there mud on the rug?"

Result: By recounting the sequence, the Witness realizes the Butler couldn't have done it because he was in the kitchen. The truth is found through the Chain of Thought.

Quizzes and Exercises

Knowledge Check

1. What is the primary trigger phrase for Zero-Shot CoT?

2. Why does CoT improve accuracy in complex math?

3. True or False: CoT is only useful for math problems.

Exercise: The Recipe Architect

Challenge

Scenario

You have leftover chicken, chickpeas, and pesto. You have 15 minutes to cook.

Task

Craft a CoT prompt that asks the AI to first analyze the cooking time of each ingredient and then suggest a 15-minute meal plan based on those times.

Analysis Goal: Observe how the AI prioritizes the ingredients (e.g., since chicken is already cooked, it should be added last to avoid drying out).